3.03 Chord pattern and Timpani

- Uses chord patterns as in versions 2.08 and 2.09.

- Adds a simple drum function whose volume is controlled by the meteorological data.

- The range and acceptance functions are not used.

```python
from pyknon.genmidi import Midi
from pyknon.music import Rest, Note, NoteSeq
from music_generation import *
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
import matplotlib.ticker as plticker
from datetime import date
```

Transform Meteorological data

```python
def scale(a):
    # min-max normalization to the range 0..1
    return (a - a.min()) / (a.max() - a.min())

def read_meteo_data(fName):
    colNames = ['Stao', 'time', 'T_Boden_20cm', 'V_Windböe', 'T_Chill', 'Flash_30km', 'Glob_rad',
                'QFE', 'T_2m', 'Flash_3km', 'Rain_Sum', 'Rain_intens', 'H_rel', 'visibi',
                'V_wind', 'stabw_V_wind', 'direction_wind']
    # semicolon-separated file, first 3 lines skipped, '-' marks missing values
    df = pd.read_csv(fName, sep=';', skiprows=3, names=colNames, na_values='-')
    print(df.head())
    return df

fPath = '/mnt/daten/04_Schule/42_Kanti/Matrua/Music_generation/Organisation/MeteoSchweiz/Daten/'
fName = 'order_74947_data.txt'
dM = read_meteo_data(fPath + fName)

#---- Determine parameters -----------
NT, MP = dM.shape  # NT: number of time steps (rows), MP: number of columns
print('-----------------')
print('NT, MP', NT, MP)
```

```
  Stao          time  T_Boden_20cm  V_Windböe  T_Chill  Flash_30km  Glob_rad
0  KLO  201908270000          20.4        1.2     14.2           0         2
1  KLO  201908270010          20.4        0.9     14.4           0         2
2  KLO  201908270020          20.4        1.1     14.4           0         1
3  KLO  201908270030          20.4        0.8     13.8           0         2
4  KLO  201908270040          20.4        0.5     14.2           0         2

     QFE  T_2m  Flash_3km  Rain_Sum  Rain_intens  H_rel  visibi  V_wind
0  968.2  14.2          0       0.0          0.0   99.6  6626.0     0.7
1  968.2  14.4          0       0.0          0.0   98.5  1277.0     0.5
2  968.2  14.4          0       0.0          0.0   98.6  4900.0     0.5
3  968.3  13.8          0       0.0          0.0   99.0  7417.0     0.6
4  968.2  14.2          0       0.0          0.0   99.7   981.0     0.4

   stabw_V_wind  direction_wind
0             9             110
1            40             198
2            28             333
3             4             326
4            30             256
-----------------
NT, MP 2160 17
```

Chords and scales

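```python
# Intervals in semitones above the root C (0 = C)
major = np.array([0, 2, 4, 5, 7, 9, 11])  # major scale
minor = np.array([0, 2, 3, 5, 7, 8, 10])  # natural minor scale
C7    = np.array([0, 4, 7, 10])           # dominant seventh chord
CM7   = np.array([0, 4, 7, 11])           # major seventh chord
Cm7   = np.array([0, 3, 7, 10])           # minor seventh chord
Cm    = np.array([0, 3, 7])               # minor triad
Cdim  = np.array([0, 3, 6])               # diminished triad
C     = np.array([0, 4, 7])               # major triad
power = np.array([0, 7])                  # power chord (root and fifth)
B     = np.array([0])                     # root note only
```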

met_transform

- The rolling mean removes noise from the data.

- The factors scale each data series so that the melody plays in a suitable range.

- start (in hours) sets the starting point of the melodies by cutting off the beginning of the data.

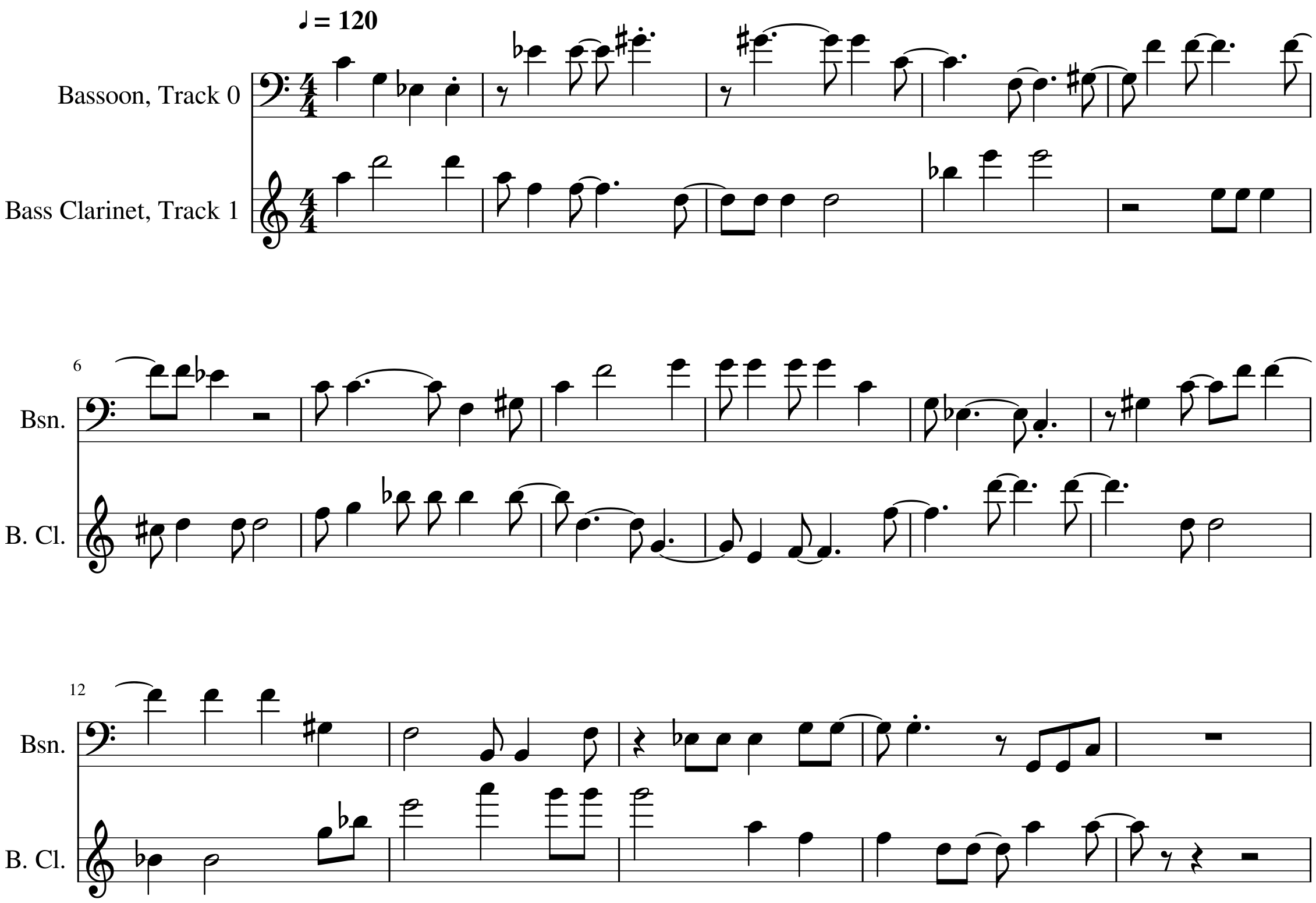
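To see what the three settings do, here is a minimal sketch on one toy column. It assumes made-up values for the data, the window, the factor and the start offset (counted directly in data steps here; met_transform itself converts hours to 10-minute steps with start*6). An odd window is used so that exactly one NaN appears at each end of the smoothed series.

```python
import numpy as np
import pandas as pd

# Toy series standing in for one meteo column (hypothetical values, 10-minute steps)
raw = pd.Series([10.0, 12.0, 11.0, 13.0, 12.0, 14.0, 13.0, 15.0])

window = 3       # rolling-mean window (hypothetical)
factor = 0.5     # scaling factor (hypothetical)
start_steps = 2  # data steps cut off at the beginning (hypothetical)

# Centered rolling mean: smooths the noise, leaves NaN where the window is incomplete
smooth = raw.rolling(window=window, center=True).mean()

# Scale the smoothed series so that the melody lands in the wanted range
scaled = smooth.to_numpy() * factor

# Cut the start and the NaN border, like cut_begin and cut_border in met_transform
cut_border = window // 2
cut_begin = max(cut_border, start_steps)
trimmed = scaled[cut_begin:-cut_border]
print(trimmed)
```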

Tune_W

- This tune uses the temperature and humidity data.

- Instruments: bassoon and clarinet.

- Chord pattern: Cm Ab Fm Ddim G7 Cm Fm G7
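Adding an integer to a chord shape transposes it by that many semitones, so C + 8 is the major triad on Ab and C7 + 7 is G7. The sketch below checks the pattern of tune_W by folding each transposed chord into one octave; NOTE_NAMES, pitch_classes and note_names are illustrative helpers, not part of music_generation.

```python
import numpy as np

NOTE_NAMES = ['C', 'C#/Db', 'D', 'D#/Eb', 'E', 'F', 'F#/Gb', 'G', 'G#/Ab', 'A', 'A#/Bb', 'B']

# Chord shapes on C, as defined in the notebook
C = np.array([0, 4, 7])
Cm = np.array([0, 3, 7])
Cdim = np.array([0, 3, 6])
C7 = np.array([0, 4, 7, 10])

def pitch_classes(chord):
    """Fold the tones into one octave (0-11), remove duplicates and sort them."""
    return sorted({int(t) % 12 for t in chord})

def note_names(chord):
    return [NOTE_NAMES[p] for p in pitch_classes(chord)]

# Chord pattern of tune_W: Cm Ab Fm Ddim G7 Cm Fm G7
pattern = [Cm, C + 8, Cm + 5, Cdim + 2, C7 + 7, Cm, Cm + 5, C7 + 7]
for chord in pattern:
    print(note_names(chord))
```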

```python
def tune_W():
    tune_name = 'tune_W'
    np.random.seed(39)  # 56
    bar, bpb = 15, 4    # bar: number of bars, bpb: beats per bar
    melody_len = bar * bpb
    mpb = 120           # minutes of meteo data per beat
    start = 10          # start in hours

    # met_transform(data, [factor for each data series], [window of the rolling mean], start)
    trans = met_transform(dM, [1, 1, 1, 1, 0.5, 1, 0.38, 1, 1, 1, 0.1, 1, 4.5, 1, 1],
                          [6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 2], start)

    # chord pattern Cm Ab Fm Ddim G7 Cm Fm G7, one bar per chord
    scales = [[1, Cm], [1, C + 8], [1, Cm + 5], [1, Cdim + 2],
              [1, C7 + 7], [1, Cm], [1, Cm + 5], [1, C7 + 7]]
    end_scale = [[1, Cm], [1, power]]
    pattern = pattern_gen(scales, end_scale, melody_len)

    # humidity (trans[10] = H_rel)
    range_1 = liniar_range(0, 0, 0, 0)  # not in use
    rythem1, notenr_1 = ran_duration([1/16, 1/8, 1/4, 1/2], [0, 2, 3, 1], melody_len)
    melody1 = meteo_melody(trans[10], pattern, 60, range_1, notenr_1, rythem1, mpb)
    volumes1 = ran_volume([0, 120], [1, 8], notenr_1)
    notes1 = NoteSeq([Note(no, octave=0, dur=du, volume=vo)
                      for no, du, vo in zip(melody1, rythem1, volumes1)])

    # temperature (trans[6] = T_2m)
    range_2 = liniar_range(0, 0, 0, 0)  # not in use
    rythem2, notenr_2 = ran_duration([1/16, 1/8, 1/4, 1/2], [0, 2, 3, 2], melody_len)
    melody2 = meteo_melody(trans[6], pattern, 66, range_2, notenr_2, rythem2, mpb)
    volumes2 = ran_volume([0, 120], [1, 8], notenr_2)
    notes2 = NoteSeq([Note(no, octave=0, dur=du, volume=vo)
                      for no, du, vo in zip(melody2, rythem2, volumes2)])

    instruments = [70, 71]  # GM programs counted from 0: bassoon, clarinet
    notes = [notes1, notes2]
    return notes, instruments, tune_name
```


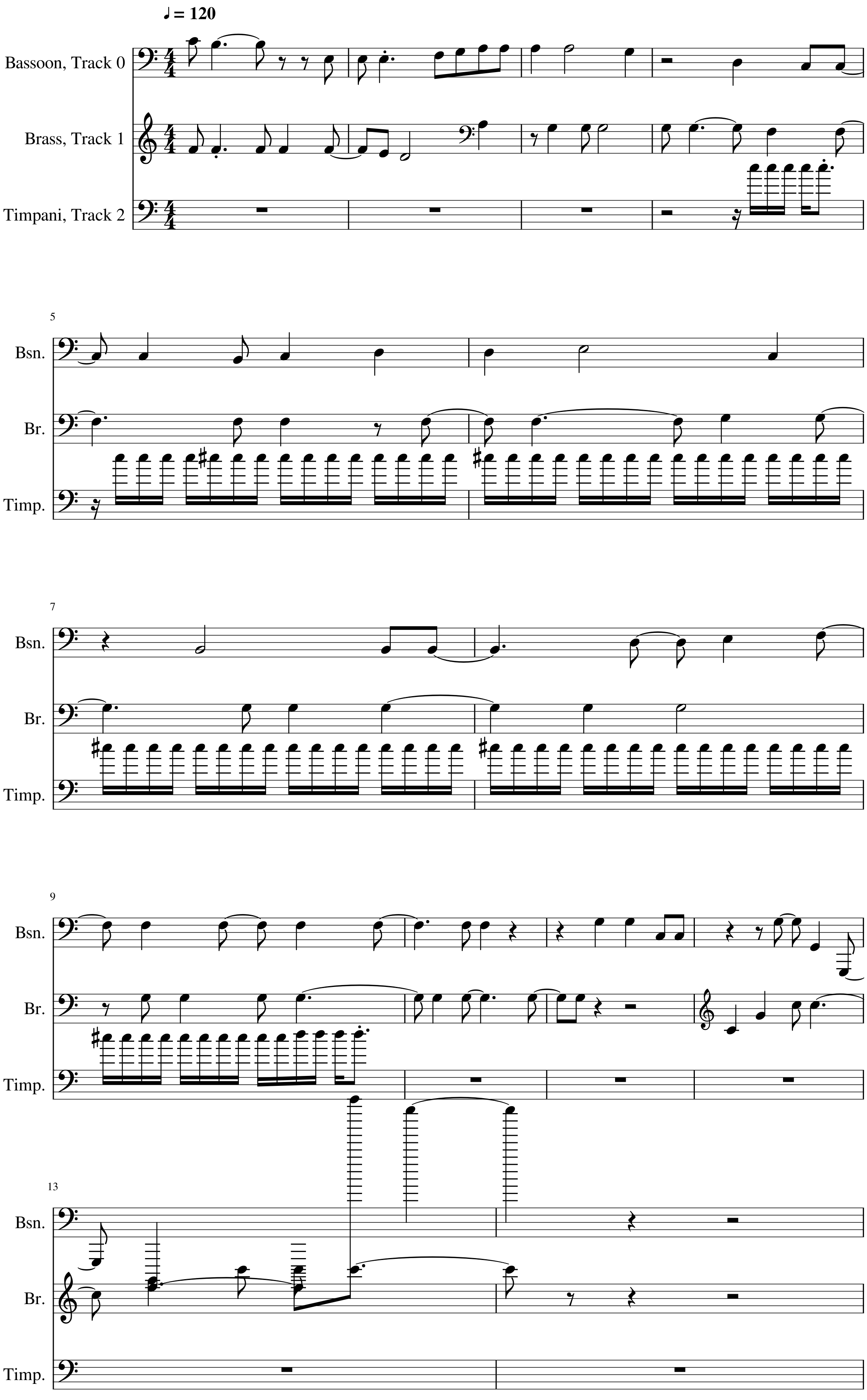

Tune_X

- Major scale

- The data is the rain of 8 September 2019 (see 3.03.1 Display meteorological data).

- Air pressure and temperature form the melody.

- During this rain sequence, air pressure and temperature are relatively constant, so the melody often plays the same note.

- The volume of the timpani is controlled by the amount of rain. The rain data is magnified by a large factor (500 in tune_X) and the peaks are then cut off at the MIDI maximum of 127. Otherwise the drum would only be heard for a short moment.
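Why the rain data needs a large factor: MIDI volumes are integers from 0 to 127, and small rain amounts simply round to 0. The sketch uses made-up rain values together with the factor 500 from tune_X.

```python
import numpy as np

# Hypothetical rain values after the rolling mean
rain = np.array([0.0, 0.02, 0.1, 0.5, 0.05])

# Without magnification all of these values round to a MIDI volume of 0
unscaled = np.round(rain).astype(int)

# Magnify by the factor used in tune_X and cut the peaks at the MIDI maximum of 127
factor = 500
volumes = np.clip(np.round(rain * factor), 0, 127).astype(int)
print(unscaled, volumes)
```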

Met_percus

- met_percus is a function that creates a simple drum track.

- The played note can be a single note or a list of several notes, which is repeated.

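```python
def met_percus(meteo, note, frequ, volume, melody_len, mpb):
    met_resolution = 10                          # minutes between two data points
    note_nr = int(melody_len / (frequ * 4))      # number of drum strokes
    # print(melody_len, mpb, note_nr)
    rythem = np.repeat(frequ, note_nr)           # every stroke has the duration frequ
    # a single note or a list of notes, repeated cyclically up to note_nr strokes
    melody = np.resize(np.asarray(note), note_nr)
    # the volume argument is not used: the volume of each stroke comes from the data
    volumes = np.zeros(note_nr, dtype=int)
    for npn in range(note_nr):                   # npn: note per note (index)
        beat_nr = npn * frequ * 4                # beat at which this stroke starts
        i_met = np.round((beat_nr * mpb) / met_resolution).astype(int)  # index in the data array
        vol = meteo[i_met]                       # data value at this beat
        volumes[npn] = np.round(vol).astype(int) # round to an int
    volumes = np.where(volumes > 127, 127, volumes)  # cut the peaks at the MIDI maximum
    return melody, rythem, volumes
```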
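The per-stroke loop in met_percus can also be written with vectorized NumPy. The sketch below is a hypothetical helper, percus_volumes, not part of music_generation; it assumes the same 10-minute data resolution and clips with np.clip instead of np.where. With frequ = 1/16 each stroke lasts a quarter of a beat, so at mpb = 60 consecutive strokes are 1.5 data steps apart and the rounded indices advance by 1 or 2 (NumPy rounds halves to the nearest even number).

```python
import numpy as np

def percus_volumes(meteo, frequ, melody_len, mpb, met_resolution=10):
    """Vectorized sketch of the volume lookup in met_percus (hypothetical helper)."""
    note_nr = int(melody_len / (frequ * 4))                        # number of drum strokes
    beat_nr = np.arange(note_nr) * frequ * 4                       # beat at which each stroke starts
    i_met = np.round(beat_nr * mpb / met_resolution).astype(int)   # data index per stroke
    vol = np.round(np.asarray(meteo)[i_met]).astype(int)           # data value as volume
    return np.clip(vol, 0, 127)                                    # keep inside the MIDI range

meteo = np.arange(0, 400, 20.0)  # synthetic ramp: 0, 20, ..., 380
print(percus_volumes(meteo, frequ=1/16, melody_len=2, mpb=60))
```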

```python
def tune_X():
    tune_name = 'tune_X'
    # np.random.seed(56)
    bar, bpb = 13, 4    # bar: number of bars, bpb: beats per bar
    melody_len = bar * bpb
    mpb = 60            # minutes of meteo data per beat
    start = 276         # start in hours

    trans = met_transform(dM, [1, 1, 1, 1, 1, 4, 0.8, 1, 500, 1, 0.2, 1, 4.5, 4, 1],
                          [6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 2], start)

    scales = [[8, major]]      # major scale, in blocks of 8 bars
    end_scale = [[3, power]]   # power chord for the last 3 bars
    pattern = pattern_gen(scales, end_scale, melody_len)

    # pressure (trans[5] = QFE)
    range_1 = liniar_range(0, 0, 0, 0)  # not in use
    rythem1, notenr_1 = ran_duration([1/32, 1/8, 1/4, 1/2], [0, 2, 3, 1], melody_len)
    melody1 = meteo_melody(trans[5], pattern, 60, range_1, notenr_1, rythem1, mpb)
    volumes1 = ran_volume([0, 100], [1, 8], notenr_1)
    notes1 = NoteSeq([Note(no, octave=0, dur=du, volume=vo)
                      for no, du, vo in zip(melody1, rythem1, volumes1)])

    # temperature (trans[6] = T_2m)
    range_2 = liniar_range(0, 0, 0, 0)  # not in use
    rythem2, notenr_2 = ran_duration([1/16, 1/8, 1/4, 1/2], [0, 2, 3, 2], melody_len)
    melody2 = meteo_melody(trans[6], pattern, 65, range_2, notenr_2, rythem2, mpb)
    volumes2 = ran_volume([0, 100], [1, 8], notenr_2)
    notes2 = NoteSeq([Note(no, octave=0, dur=du, volume=vo)
                      for no, du, vo in zip(melody2, rythem2, volumes2)])

    # timpani, volume from the rain (trans[8] = Rain_Sum, magnified by 500)
    # met_percus(meteo, note, frequ, volume, melody_len, mpb)
    melody3, rythem3, volumes3 = met_percus(trans[8], [72, 73, 74], 1/16, [30, 120], melody_len, mpb)
    notes3 = NoteSeq([Note(no, octave=0, dur=du, volume=vo)
                      for no, du, vo in zip(melody3, rythem3, volumes3)])

    instruments = [70, 61, 47]  # GM programs counted from 0: bassoon, brass section, timpani
    notes = [notes1, notes2, notes3]
    return notes, instruments, tune_name
```

Instruments: at least the 128 General MIDI (GM) instruments are available. Depending on the soundfonts, there is a bigger choice. A list of the GM instruments can be found here: https://jazz-soft.net/demo/GeneralMidi.html
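The instrument numbers in the tunes are MIDI program numbers. The GM list numbers the programs 1 to 128, while a MIDI program change carries 0 to 127. Assuming pyknon passes the numbers through unchanged (which matches tune_W's 70 and 71 being bassoon and clarinet), the tunes use the instruments below. gm_name is a hypothetical helper with only a small excerpt of the GM list.

```python
# Excerpt of the General MIDI list (1-based program numbers)
GM_PROGRAMS = {48: 'Timpani', 62: 'Brass Section', 71: 'Bassoon', 72: 'Clarinet'}

def gm_name(program_0_based):
    """Look up a 0-based MIDI program number in the 1-based GM list excerpt."""
    return GM_PROGRAMS.get(program_0_based + 1, 'not in this excerpt')

for tune, instruments in {'tune_W': [70, 71], 'tune_X': [70, 61, 47]}.items():
    print(tune, [gm_name(p) for p in instruments])
```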

Generate MIDI and audio files

```python
def gen_midi():
    # squeeze the notes into a MIDI framework
    notes, instruments, tune_name = tune_W()  # <--- select a tune
    nTracks = len(notes)
    m = Midi(number_tracks=nTracks, tempo=120, instrument=instruments)
    for iTrack in range(nTracks):
        m.seq_notes(notes[iTrack], track=iTrack)
    #--- write the MIDI file -----
    midi_file_name = tune_name + '.mid'  # set the name of the file
    m.write(midi_file_name)
    return midi_file_name

######--- Main ---######
midi_file_name = gen_midi()
midi_play(midi_file_name)
midi_audio(midi_file_name)
midi_png(midi_file_name)
```
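A quick check of how much music and how much weather each tune covers: melody_len = bar * bpb beats are played at the tempo passed to Midi, while each beat consumes mpb minutes of data. The sketch assumes that tempo=120 means 120 quarter-note beats per minute and ignores the small overshoot of the last random note; tune_timing is a hypothetical helper.

```python
def tune_timing(bars, beats_per_bar, mpb, tempo=120):
    """Return (beats, playing time in s, meteo time span in h) for one tune."""
    beats = bars * beats_per_bar      # melody_len
    playing_s = beats * 60 / tempo    # tempo in quarter-note beats per minute (assumed)
    data_h = beats * mpb / 60         # mpb: minutes of meteo data per beat
    return beats, playing_s, data_h

print(tune_timing(15, 4, 120))  # tune_W
print(tune_timing(13, 4, 60))   # tune_X
```

So tune_W squeezes five days of weather into about half a minute, while tune_X plays 52 hours in a slightly shorter piece.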

External Music_Generation library

This library changes from version to version; new or changed code is explained above first. The following is a copy of music_generation.py.

```python
from pyknon.genmidi import Midi
from pyknon.music import Rest, Note, NoteSeq
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
import matplotlib.ticker as plticker
from datetime import date

# [[[[[[[[[[[[[[[[[[[ -- Functions for Music Generation -- ]]]]]]]]]]]]]]]]]]]

def scale_create(tones):
    tones = np.asarray(tones)      # tones forming a chord or scale in the first octave (0-11)
    if any(tones > 11):            # tones above one octave?
        tones = np.mod(tones, 12)  # fold the tones into one octave
        tones = np.sort(tones)     # sort the tones again
    tones = np.unique(tones)       # remove duplicate tones
    octave = np.repeat(np.linspace(0, 108, num=10), len(tones))
    scale = np.add(octave, np.tile(tones, 10))  # add octave and note element-wise
    return scale.astype(int)
```
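scale_create repeats the pitch classes of a chord or scale in 10 octaves starting at MIDI note 0. The same array can be built with an outer sum of octave offsets and pitch classes; scale_outer is an illustrative alternative, not part of the library.

```python
import numpy as np

def scale_outer(tones, n_octaves=10):
    """Alternative to scale_create: every pitch class in every octave, via an outer sum."""
    tones = np.unique(np.mod(np.asarray(tones), 12))  # fold into one octave, sort, deduplicate
    octaves = 12 * np.arange(n_octaves)               # 0, 12, ..., 108
    return np.add.outer(octaves, tones).ravel()       # octave by octave, like scale_create

c_major_triad = scale_outer([0, 4, 7])
print(c_major_triad[:6], len(c_major_triad), c_major_triad[-1])
```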

```python
def fade(start, end, steps):
    fade = np.around(np.linspace(start, end, num=steps))
    fade = fade.astype(int)
    return fade

def ran_volume(volume, prob_volume, melody_len):
    volume = np.asarray(volume, dtype=int)  # allowed volumes of the notes
    prob_volume = np.asarray(prob_volume)   # relative frequency of each volume
    prob_volume = prob_volume / np.sum(prob_volume)
    volumes = np.r_[np.random.choice(volume, size=melody_len, p=prob_volume)]
    return volumes

# liniar_range: generates the range in which the instrument can play.
def liniar_range(r_start, r_top, r_edge, r_end):  # acceptance range of the instrument
    h = 100                             # height of the acceptance function
    a_range = np.zeros(121, dtype=int)  # only up to MIDI note 120, as 127 does not complete an octave
    np.put(a_range, range(r_start, r_top), np.linspace(0, h, num=(r_top - r_start)))
    np.put(a_range, range(r_top, r_edge), np.linspace(h, h, num=(r_edge - r_top)))
    np.put(a_range, range(r_edge, r_end), np.linspace(h, 0, num=(r_end - r_edge)))
    return a_range
```

```python
# i_last_note: finds the index of the note in the current scale that is closest to `note`.
def i_last_note(note, scale):
    i_note = (np.abs(scale - note)).argmin()
    return i_note

# intvl_next is a modification of intvl_melody. It creates only one interval
# instead of a whole array/melody at once.
def intvl_next(intvl, prob_intvl):  # single interval
    intvl = np.asarray(intvl)            # possible intervals
    prob_intvl = np.asarray(prob_intvl)  # probability of each interval
    prob_intvl = prob_intvl / np.sum(prob_intvl)
    interval = np.random.choice(intvl, size=1, p=prob_intvl)
    return interval[0]

# acceptance: accepts or refuses proposed notes with the Metropolis-Hastings algorithm.
# x is the acceptance value of the current note, x_new that of the proposed note.
def acceptance(x, x_new):
    if x_new < 1 and x < 1:
        # neither the current nor the proposed note lies inside the range
        raise ValueError('start_note not in range')
    if x < 1:      # current note outside the range: accept any proposal inside it
        return True
    quot = x_new / x
    if quot >= 1:
        return True
    return np.random.uniform(0, 1) < quot
```
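In acceptance, a proposal that does not lower the acceptance value is always taken; otherwise it is taken with probability x_new / x. This is the Metropolis acceptance rule (no Hastings correction for asymmetric interval probabilities is applied). The standalone sketch below (metropolis_accept is a hypothetical name; it assumes p_current > 0) checks empirically that a proposal with half the acceptance value is taken about half of the time.

```python
import numpy as np

def metropolis_accept(p_current, p_proposed, rng):
    """Accept with probability min(1, p_proposed / p_current); assumes p_current > 0."""
    ratio = p_proposed / p_current
    return ratio >= 1 or rng.uniform() < ratio

rng = np.random.default_rng(0)
trials = 10_000
rate = np.mean([metropolis_accept(100, 50, rng) for _ in range(trials)])
print(rate)  # expected to be near 0.5
```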

```python
def ran_duration(duration, prob_duration, melody_len):
    duration = np.asarray(duration)            # allowed durations of the notes
    prob_duration = np.asarray(prob_duration)  # relative frequency of each duration
    prob_duration = prob_duration / np.sum(prob_duration)
    # melody_len counts quarter-note beats, the durations are fractions of a whole note
    cumsum, melody_len, rythem = 0, melody_len / 4, np.asarray([])
    while cumsum < melody_len:
        note_len = np.random.choice(duration, p=prob_duration)
        cumsum = cumsum + note_len
        rythem = np.append(rythem, note_len)
    return rythem, len(rythem)
```
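ran_duration keeps drawing random durations until the total reaches melody_len / 4 whole notes, so the last note can run slightly past the end of the melody. A sketch of the same loop with NumPy's Generator API (random_rhythm and the seed are illustrative assumptions) makes that property easy to check.

```python
import numpy as np

def random_rhythm(durations, weights, melody_len, rng):
    """Draw note durations (fractions of a whole note) until melody_len beats are filled."""
    p = np.asarray(weights, dtype=float)
    p = p / p.sum()
    target = melody_len / 4  # melody_len counts quarter-note beats
    rhythm = []
    while sum(rhythm) < target:
        rhythm.append(rng.choice(durations, p=p))
    return np.array(rhythm)

rng = np.random.default_rng(1)
rhythm = random_rhythm([1/16, 1/8, 1/4, 1/2], [0, 2, 3, 1], melody_len=60, rng=rng)
print(len(rhythm), rhythm.sum())
```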

```python
# pattern_gen takes the chord pattern (scales), repeats it as long as the melody lasts,
# and computes the beat numbers at which the chords change. It also appends the end pattern.
def pattern_gen(scales, end_scale, melody_len):
    bpb = 4  # beats per bar
    #--scales
    # dtype=object: each row holds [number of bars, chord array] of different lengths
    scales = np.asarray(scales, dtype=object)
    # how many times the pattern is used (rounded up)
    factor = int(np.trunc(melody_len / (np.sum(scales[:, 0]) * bpb)) + 1)
    change_times = np.cumsum(np.tile(scales[:, 0], factor)) * bpb  # list of change times
    change_times = np.concatenate((np.asarray([0]), change_times))[:-1]  # add 0 at the beginning, remove the last element
    for i in range(len(scales)):  # send the scales to scale_create
        scales[i, 1] = scale_create(scales[i, 1])
    pattern = np.tile(scales, (factor, 1))  # tile the scales as long as the melody is
    pattern[:, 0] = change_times            # insert change_times into the scales
    #--end_scales
    end_scale = np.asarray(end_scale, dtype=object)
    end_times = melody_len - np.cumsum((end_scale[:, 0] * bpb)[::-1])[::-1]  # reversed cumsum subtracted from melody_len
    end_scale[:, 0] = end_times  # insert end_times into end_scale
    for i in range(len(end_scale)):  # send end_scale to scale_create
        end_scale[i, 1] = scale_create(end_scale[i, 1])
    #--merge
    pattern = np.delete(pattern, np.argwhere(pattern[:, 0] >= end_scale[0, 0]), 0)  # remove unneeded scales
    pattern = np.concatenate((pattern, end_scale), axis=0)
    pattern = np.delete(pattern, np.argwhere(pattern[:, 0] >= melody_len), 0)  # remove if the end part has 0 bars
    return pattern
```
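The timing part of pattern_gen can be checked by hand. For tune_W (8 one-bar chords, a two-bar ending, 60 beats) the pattern is tiled twice, the ending starts at beat 60 - 8 = 52, and every chord of the second pass that starts at or after beat 52 is dropped, so the chord changes every 4 beats. The sketch below reproduces only the change times with a hypothetical helper, change_times, and leaves out the chord arrays.

```python
import numpy as np

def change_times(bars_per_chord, end_bars, melody_len, bpb=4):
    """Beat numbers where the chords start (timing part of pattern_gen only)."""
    bars = np.asarray(bars_per_chord)
    factor = int(melody_len // (bars.sum() * bpb)) + 1  # repetitions of the pattern
    starts = np.concatenate(([0], np.cumsum(np.tile(bars, factor)) * bpb))[:-1]
    end_starts = melody_len - np.cumsum((np.asarray(end_bars) * bpb)[::-1])[::-1]
    starts = starts[starts < end_starts[0]]             # drop chords overlapping the ending
    times = np.concatenate((starts, end_starts))
    return times[times < melody_len]                    # drop end parts with 0 bars

print(change_times([1] * 8, [1, 1], 60))  # tune_W
print(change_times([8], [3], 52))         # tune_X
```

For tune_X this gives the major scale at beats 0 and 32 and the power chord from beat 40 to the end.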

```python
def acceptance_melody(intvl, prob_intvl, pattern, start_note, a_range, notenr, rythem):
    melody = np.zeros(notenr, dtype=int)
    cum_rythem = np.cumsum(rythem) * 4
    cum_rythem = np.concatenate(([0], cum_rythem))[:-1]  # add 0 at the beginning, remove the last element
    scale_change = pattern[:, 0]
    scale_nr = 0
    scale = pattern[scale_nr, 1]
    melody[0] = scale[i_last_note(start_note, scale)]
    for npn in range(1, notenr):  # npn: note per note (index)
        scale_nr = np.ravel(np.argwhere(scale_change <= cum_rythem[npn - 1]))[-1]
        scale = pattern[scale_nr, 1]
        accept = False
        while not accept:  # as long as accept == False
            inote = i_last_note(melody[npn - 1], scale)
            inote_next = inote + intvl_next(intvl, prob_intvl)  # current note plus the proposed interval
            accept_val = a_range[[melody[npn - 1], scale[inote_next]]]  # get the acceptance values
            accept = acceptance(accept_val[0], accept_val[1])
        melody[npn] = scale[inote_next]
    return melody

# plot_range: plot all ranges together
def plot_range(ranges, labels, title):
    fig, ax = plt.subplots()
    plt.xlabel('Midi Note')
    plt.ylabel('Acceptance')
    plt.title(title)
    for a_range, lab in zip(ranges, labels):
        ax.plot(range(121), a_range, label=lab)
    ax.vlines(x=np.linspace(0, 108, num=10), ymin=0, ymax=10, color='grey',
              label='Octaves', linewidth=1)  # plot octaves
    plt.legend()
    plt.show()

def meteo_melody(meteo, pattern, start_note, a_range, notenr, rythem, mpb):
    # a_range is not used in this function
    met_resolution = 10  # minutes between two data points
    melody = np.zeros(notenr, dtype=int)
    cum_rythem = np.cumsum(rythem) * 4              # beat number at the end of each note
    cum_rythem = np.concatenate(([0], cum_rythem))  # add 0 at the beginning
    scale_change = pattern[:, 0]
    scale_nr = 0
    scale = pattern[scale_nr, 1]
    melody[0] = scale[i_last_note(start_note, scale)]
    for npn in range(1, notenr):  # npn: note per note (index)
        scale_nr = np.ravel(np.argwhere(scale_change <= cum_rythem[npn - 1]))[-1]
        scale = pattern[scale_nr, 1]
        # find the interval
        inter = np.asarray([cum_rythem[npn - 1], cum_rythem[npn]])    # beat numbers
        inter = np.round((inter * mpb) / met_resolution).astype(int)  # indices in the data array
        intvl = meteo[inter[1]] - meteo[inter[0]]                     # difference of the data
        intvl = np.round(intvl).astype(int)                           # round to an int
        inote_befor = i_last_note(melody[npn - 1], scale)  # index of the previous note in the scale
        inote = inote_befor + intvl                        # index of the new note in the scale
        melody[npn] = scale[inote]                         # add it to the melody
    plt.plot(cum_rythem[1:], melody)
    plt.xlabel('beat nr.')
    plt.ylabel('midi note nr')
    return melody
```
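The core idea of meteo_melody is that the melody follows the change of the data, not its values: the difference of the data between the start and end of the previous note, rounded to an integer, is the number of scale steps to the next note. The sketch below keeps only that step, with a single C major scale instead of a chord pattern; data_melody and the toy data are assumptions for illustration. Like the original, it does not check that the index stays inside the scale.

```python
import numpy as np

def data_melody(meteo, rhythm, scale, start_note, mpb, met_resolution=10):
    """Each new note moves through the scale by the rounded change of the data
    during the previous note (single scale, no chord changes)."""
    bounds = np.concatenate(([0], np.cumsum(rhythm) * 4))         # note boundaries in beats
    idx = np.round(bounds * mpb / met_resolution).astype(int)      # beat -> data index
    i = int(np.abs(scale - start_note).argmin())                   # closest scale note to start_note
    melody = [int(scale[i])]
    for k in range(1, len(rhythm)):
        step = int(np.round(meteo[idx[k]] - meteo[idx[k - 1]]))    # data change during note k-1
        i = i + step
        melody.append(int(scale[i]))
    return melody

# C major in 10 octaves, built like scale_create
scale = (12 * np.arange(10)[:, None] + np.array([0, 2, 4, 5, 7, 9, 11])).ravel()
meteo = np.array([0.0, 1.0, 3.0, 2.0, 2.0])   # toy data, one value per 10 minutes
print(data_melody(meteo, [1/4] * 4, scale, start_note=60, mpb=10))
```

With one beat per note and mpb = 10, each note reads one data step: the data rises by 1, then 2, then falls by 1, so the melody moves C4, D4, F4, E4.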

```python
# [[[[[[[[[[[[[[[[[[[ -- Functions for Meteo Transformation -- ]]]]]]]]]]]]]]]]]]]

# met_transform takes the rolling mean of each column, scales it and cuts the borders
def met_transform(dM, factors, means, start):
    col_nr = dM.shape[1] - 2  # the first two columns are location and time
    start = int(start * 6)    # hours -> 10-minute data steps
    # number of NaN values at the border caused by the rolling mean
    cut_border = np.trunc((np.amax(means)) / 2).astype(int)
    cut_begin = np.amax([cut_border, start])
    trans = np.zeros((col_nr, (dM.shape[0] - cut_border - cut_begin)))
    if col_nr != len(factors) or col_nr != len(means):
        print('dM, factor, mean not same length')
    for nr, factor, mean in zip(range(col_nr), factors, means):
        # nr+2: the first two columns are location and date
        Yw = np.array(dM[dM.columns[nr + 2]].rolling(window=mean, center=True).mean())
        Yw = Yw * factor
        trans[nr] = Yw[cut_begin:-cut_border]  # remove the NaN values at the beginning and end
    return trans

# [[[[[[[[[[[[[[[[[[[ -- Functions for Sound generation -- ]]]]]]]]]]]]]]]]]]]
import subprocess

default_soundfont = '/usr/share/sounds/sf3/MuseScore_General.sf3'

def midi_play(midi_in, soundfont='/home/viturin/-vitis/Documents/MuseScore2/Soundfonts/Compifont_13082016.sf2'):
    # cvlc = VLC without GUI; 'vlc://quit' closes VLC after playback
    subprocess.call(['cvlc', midi_in, 'vlc://quit', '--soundfont', soundfont])

def midi_audio(midi_in, name_out='none', soundfont=default_soundfont):
    # export with MuseScore; the soundfont argument is not used by this call
    if name_out == 'none':
        name_out = midi_in.replace('.mid', '.flac')
    else:
        name_out = name_out + '.flac'
    subprocess.call(['mscore', '-o', name_out, midi_in])  # -o = export as

def midi_png(midi_in, name_out='none'):
    if name_out == 'none':
        name_out = midi_in.replace('.mid', '.png')
    else:
        name_out = name_out + '.png'
    # -o = export as, -T 2 = trim the page with a 2-pixel margin
    subprocess.call(['mscore', '-o', name_out, '-T', '2', midi_in])
```